Simulating the Finals for 2013 : Pre-Week 2

Simulating the Finals for 2013 : Pre-Week 1

Simulating the Finalists for 2013 : Post Round 22 - An Alternative Approach

Simulating the Finalists for 2013 : Post Round 22

Simulating the Finalists for 2013 : Post Round 21

Simulating the Finalists for 2013 : Post Round 20

Simulating the Finalists for 2013 : Post Round 19

The 2013 Imbalanced Draw: Who's Sorry Now?

Simulating the Finalists for 2013 : Post Round 18

The Seasons We Might Have Had So Far in 2013

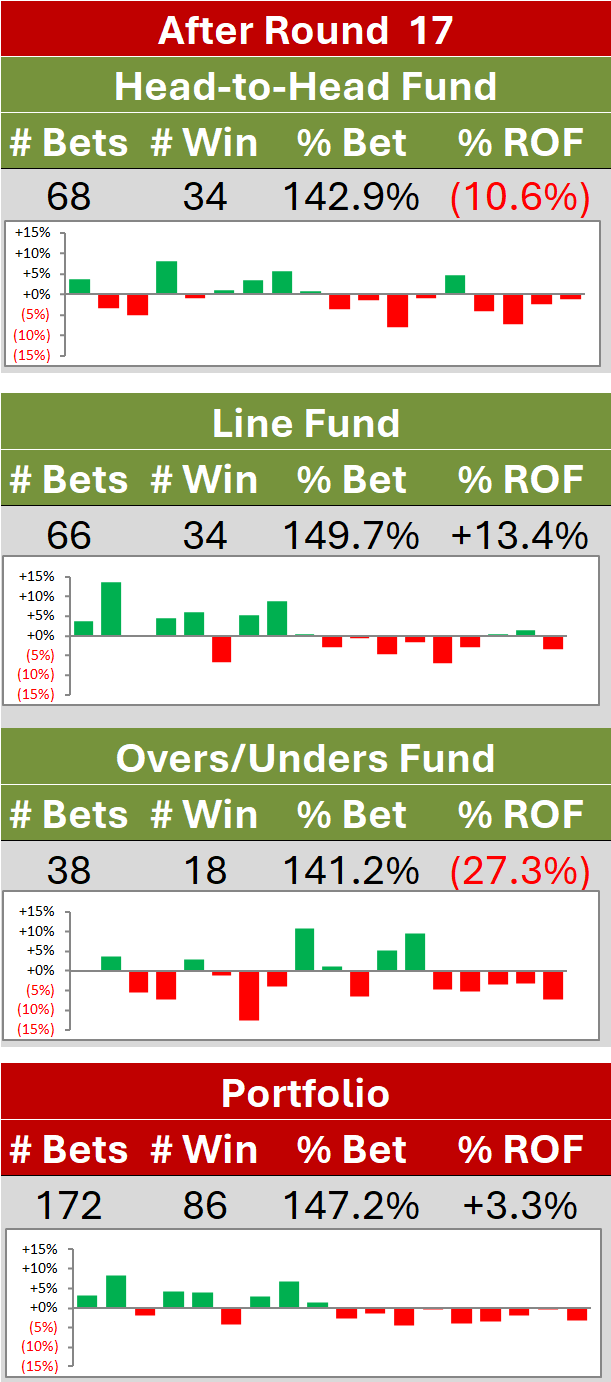

Simulating the Finalists for 2013 : Post Round 17

Simulated Performance of Head-to-Head Algorithm vs TAB Bookmaker

In the previous blog I reported that:

the TAB Bookmaker can be thought of as a Bookmaker with zero bias and a 5-5.5% sigma, and the Head-to-Head Probability Predictor can be thought of as a Punter with a +1-2% bias and a 10-12% sigma

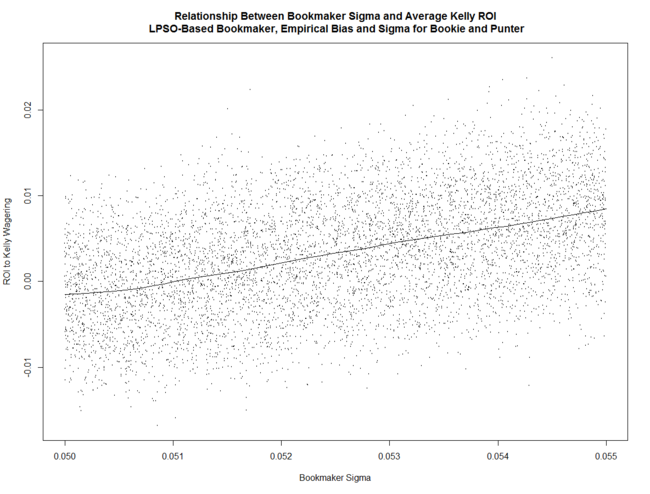

Simulating these parameter ranges, with an LPSO-like Bookmaker and with his overround varying in the range 5% to 6.5%, reveals that the return to Kelly-staking for a Punter with Bias and Sigma in these ranges (who, unlike MAFL's Head-to-Head Fund, wagers on Away as well as Home teams) is positively related to Bookmaker Sigma ...

(Note that if Bookmaker Sigma is around 5% the expected return to Kelly-staking is negative.)

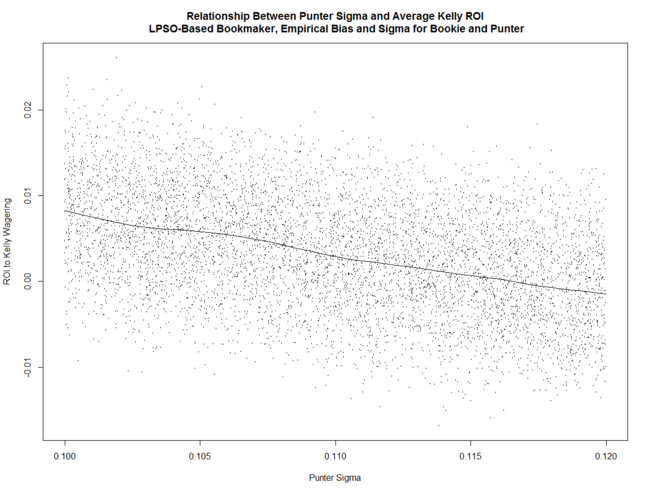

The Punter's ROI is negatively related to his Sigma ...

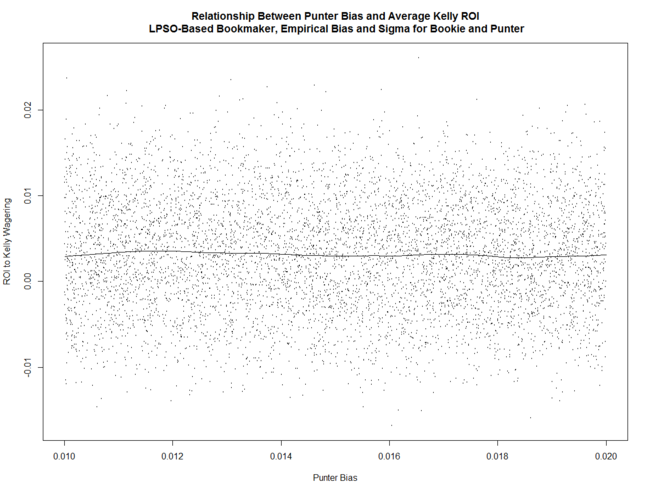

... and pretty much unrelated to his Bias.

These relationships are all similar to what we found in earlier blogs in which the parameter space investigated was much larger.

What's also interesting is that the variability of the returns to Kelly-staking is positively related to Bookmaker Sigma, broadly unrelated to Punter Sigma, and negatively related to Punter Bias.

We also find that, for every set of parameters in the simulation, the expected return to Kelly-staking exceeds that for Level-staking, which is broadly consistent with what we found when exploring the larger parameter space. However, the correlation between the average Log Probability Score and the ROI to Kelly-staking is, in absolute terms, always lower than the correlation between the average Brier Score and the ROI to Kelly-staking, which is contrary to what we found when exploring that larger space.
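For readers less familiar with the two staking approaches, here's a minimal sketch of how the stake sizes might be computed. It assumes decimal prices and the standard Kelly formula; the function names and the 5% Level-stake unit are illustrative only and are not taken from the simulations themselves.

```python
def kelly_fraction(prob: float, price: float) -> float:
    """Fraction of the bankroll to wager under the standard Kelly criterion.

    prob  : the Punter's assessed probability that the team wins
    price : the Bookmaker's decimal price (e.g. 1.90 returns $1.90 per $1 staked)
    """
    edge = prob * price - 1.0      # expected profit per unit staked
    if edge <= 0:
        return 0.0                 # no wager without a positive expectation
    return edge / (price - 1.0)    # Kelly: edge divided by net odds


def level_fraction(prob: float, price: float, unit: float = 0.05) -> float:
    """Level-staking: a fixed (hypothetical) unit whenever the edge is positive."""
    return unit if prob * price - 1.0 > 0 else 0.0


# A Punter who rates a team a 60% chance at a price of $2.00
print(kelly_fraction(0.60, 2.00), level_fraction(0.60, 2.00))
```

The key difference is that the Kelly stake grows with the Punter's assessed edge, whereas the Level stake is the same size for every wager that clears the positive-expectation hurdle.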

Using RWeka to create simple rules for when to Kelly-stake and when to beat a considered retreat from wagering, the first few rules we're offered are:

- If the Total Overround is less than 5.88% and the Bookmaker Sigma exceeds 5.24%, then the best strategy is to Kelly-stake, otherwise

- If the Total Overround is less than 5.63% and the Punter Sigma is less than 11.00%, then the best strategy is, again, to Kelly-stake, otherwise

- If the Total Overround is greater than 6.18% and the Bookmaker Sigma is less than 5.27%, then the best strategy is not to bet, otherwise

- If the Total Overround is greater than 5.34%, the Punter Sigma is less than 10.73%, and the Bookmaker Sigma exceeds 5.14%, then the best strategy is to Kelly-stake, otherwise

- If the Total Overround is less than 5.44%, then the best strategy is to Kelly-stake

For about 70% of the remaining scenarios the recommendation is not to bet.
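The rules listed above form an ordered decision list, where the first rule that fires determines the recommendation. A rough transcription into Python might look like the following. The function name and return labels are mine, inputs are assumed to be in percentage points, and scenarios not covered by the listed rules are simply flagged as unresolved because the later rules aren't reproduced here.

```python
def staking_rule(total_overround: float, bookie_sigma: float, punter_sigma: float) -> str:
    """Apply the first few rules in order; all inputs are in percentage points."""
    if total_overround < 5.88 and bookie_sigma > 5.24:
        return "kelly"
    if total_overround < 5.63 and punter_sigma < 11.00:
        return "kelly"
    if total_overround > 6.18 and bookie_sigma < 5.27:
        return "no bet"
    if total_overround > 5.34 and punter_sigma < 10.73 and bookie_sigma > 5.14:
        return "kelly"
    if total_overround < 5.44:
        return "kelly"
    # Later rules are not listed in the text, so we do not guess at them
    return "unresolved"


print(staking_rule(5.5, 5.3, 11.5))   # low overround, imprecise Bookmaker
print(staking_rule(6.3, 5.1, 11.5))   # high overround, precise Bookmaker
```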

Using Eureqa's Formulize to build a model of the ROI to Kelly-staking, we find that one of the best-fitting models, with an R-squared in excess of 85%, is:

- Expected Kelly ROI = 2.143*Bookie Sigma - Bookie Total Overround - Bookie Sigma*Punter Bias - 0.463*Punter Sigma

This suggests that, as we've found previously, the ROI to Kelly-staking is heavily dependent on the Bookmaker's precision and, to a lesser extent, on the Punter's. As well, the ROI to Kelly-staking drops percent-for-percent with the Bookmaker's Total Overround.
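To get a feel for the fitted equation, it can be evaluated directly. The sketch below assumes that all four inputs are expressed as proportions (so 5% is 0.05), which the text doesn't state explicitly; the function name is illustrative.

```python
def expected_kelly_roi(bookie_sigma: float, total_overround: float,
                       punter_bias: float, punter_sigma: float) -> float:
    """Evaluate the fitted model for the ROI to Kelly-staking.

    All inputs are assumed to be proportions (e.g. 0.05 for 5%).
    """
    return (2.143 * bookie_sigma
            - total_overround
            - bookie_sigma * punter_bias
            - 0.463 * punter_sigma)


# Roughly mid-range values from the parameter ranges simulated
roi = expected_kelly_roi(bookie_sigma=0.05, total_overround=0.0575,
                         punter_bias=0.015, punter_sigma=0.11)
print(f"{roi:+.2%}")
```

Under that assumption about units, these mid-range inputs produce an expected ROI of about -0.2%, and each one percentage point fall in the Total Overround lifts the expected ROI by one percentage point.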

And, finally, using Eureqa's Formulize to build a model of the standard deviation of the ROI to Kelly-staking, we find that one of the best-fitting models, but with an R-squared of only around 16%, is:

- Expected SD Kelly ROI = 0.067 + 0.415*Bookie Sigma - 3.600*Punter Bias*Bookie Total Overround

So, not only does the expected return to Kelly-staking rise with the Bookmaker's Sigma, so too does the variability of that return. In addition, variability falls with Punter Bias and with the Bookmaker's Total Overround.
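The variability equation can be evaluated in the same way. As before, this sketch assumes that the inputs are proportions rather than percentage points, and the function name is mine. Bear in mind that, with an R-squared of only around 16%, any single value it produces is a loose guide at best.

```python
def expected_sd_kelly_roi(bookie_sigma: float, punter_bias: float,
                          total_overround: float) -> float:
    """Evaluate the fitted model for the standard deviation of the Kelly ROI.

    All inputs are assumed to be proportions (e.g. 0.05 for 5%).
    """
    return 0.067 + 0.415 * bookie_sigma - 3.600 * punter_bias * total_overround


sd = expected_sd_kelly_roi(bookie_sigma=0.05, punter_bias=0.015,
                           total_overround=0.0575)
print(f"{sd:.2%}")
```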

SUMMARY

This blog addresses Bookmaker vs Punter scenarios that we are, based on empirical data for the TAB Bookmaker and MAFL's Head-to-Head Fund algorithm, most likely to encounter in practice, and shows that there is a fairly narrow range of scenarios - where Bookmaker Sigma is sufficiently high, and Bookmaker overround and Punter Sigma are sufficiently low - for which the expected profit to Kelly-staking is positive.

It also suggests that, within the range of parameter values explored, the variability to Kelly-staking grows with Bookmaker imprecision and shrinks with the product of Punter Bias and Bookmaker Overround.

Empirical Bias and Sigma for Selected Probability Predictors

Bookmaker vs Punter Simulations Revisited : Risk-Equalising and LPSO-Like Bookmakers

2012 - Final Simulations : Round Up of Wagering Outcomes

2012 - Final Simulations : Week 3

2012 - Final Simulations : Week 2

We lost Geelong and the Roos in Week 1 of the Finals and MARS re-rated the six remaining teams, which leaves us (using the same model that we used for Week 1 of the Finals) with the following team-versus-team probability matrix.

Broadly, Hawthorn is expected to beat everyone else fairly handily, except Sydney, which they're expected to beat less convincingly but still beat nonetheless.

Using this probability matrix for 1 million simulations yields the following team-by-team Finals outcome probabilities:

Hawthorn are now estimated to win two-thirds of the time and to make the Grand Final almost 9 times in 10.

Sydney are rated about 4/1 chances for the Flag but are about even money to play in the GF.

The remaining teams are mostly there to mop up the residual probabilities, with none rated better than about 30/1 chances for the Flag and about 5/1 or longer chances to even make the Grand Final. Adelaide, despite finishing 2nd on the ladder in the home-and-away season, are now rated only about 32/1 Flag chances and 15/1 chances of even making the Grand Final.
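For anyone wanting to translate between the odds quoted here and the underlying simulated probabilities, the conversion for odds-against prices of the form n/1 is straightforward. The function names in this small sketch are illustrative.

```python
def odds_against_to_prob(n: float) -> float:
    """Probability implied by fair odds of n/1 against."""
    return 1.0 / (n + 1.0)


def prob_to_odds_against(prob: float) -> float:
    """Fair odds-against (the n in n/1) for a given probability."""
    return (1.0 - prob) / prob


for n in (4, 5, 15, 30, 32):
    print(f"{n}/1 is about {odds_against_to_prob(n):.1%}")
```

So, a 4/1 chance corresponds to a probability of 20%, and even money to a probability of 50%.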

Simulated Grand Final quinella probabilities appear in this next table. A Hawks v Swans matchup is rated by far the most likely pairing, with a probability approaching 60%.

The next most-likely pairings are Hawks v Pies and Hawks v Eagles, which carry probabilities in the 10-15% range, then Swans v Freo and Swans v Crows matchups, which carry probabilities of around 5%.

Only four other pairings are possible, none of which include the Hawks or the Swans, and none of which are assessed by the simulations as being greater than about 1% prospects.

Amongst these quinellas only the Hawthorn v Sydney pairing at $1.90 offers any value on the TAB AFL Futures market. This wager is assessed as having a 14% edge.

Other TAB AFL Futures market wagers with a positive expectation this week are the Hawks to win the Flag at $1.65 (10% edge), the Hawks to play in the GF at $1.25 (7% edge), and the Swans to play in the GF at $1.55 (8% edge).
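The edges quoted above are consistent with the usual definition of edge as the expected profit per unit staked, which is the price multiplied by the simulated probability, less 1. The sketch below assumes that definition and uses rounded probabilities from the text, so it recovers the quoted edges only approximately. The function names are illustrative.

```python
def edge(price: float, prob: float) -> float:
    """Expected profit per $1 staked at a decimal price, given a win probability."""
    return price * prob - 1.0


def prob_implied_by_edge(price: float, quoted_edge: float) -> float:
    """Back out the probability that would produce a quoted edge at a given price."""
    return (1.0 + quoted_edge) / price


# Hawthorn v Sydney quinella at $1.90 with a probability of about 60%
print(f"{edge(1.90, 0.60):.0%}")

# Probability needed for the Hawks at $1.65 to carry a 10% edge
print(f"{prob_implied_by_edge(1.65, 0.10):.1%}")
```

A wager has a positive expectation only if its edge is greater than zero, that is, if the simulated probability exceeds 1 divided by the price.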

Adding these bets to those we've identified in AFL Futures markets in previous weeks yields the following picture:

As you can see, I've closed out those wagers whose fate has already been determined, the net return from which, it turns out, is marginally positive. The ROI from level-staking the identified opportunities is just over 1%.

Having locked in, last week, what look like very promising wagers on the Hawks and Swans at attractive prices on various AFL Futures markets, the current expectation must be for that ROI to grow.

At this point, however, all our eggs are very firmly in two baskets, each, appropriately enough, avian-based. We'll not know anything more about the fate of these wagers for at least another fortnight.

2012 - Final Simulations : Week 1

The first task for this week was to create a model to estimate the probability that one finalist should beat another using only the knowledge of which team was at home and the competing teams' MARS Ratings. Fitting a binary logistic to historical data produced the following model:

Prob(Home Team Wins) = logistic(0.446518 + 0.034125 * Home Team MARS Rating - 0.034161 * Away Team MARS Rating)

Applying that model to the most recent team MARS Ratings yields the following team-versus-team probability matrix:

(Note that, using the fitted model, the probability that Team A defeats Team B when Team A is at home is not the complement of the probability that Team B defeats Team A when Team B is at home.)
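The fitted model is simple enough to code up directly. In the sketch below the coefficients come from the equation above, but the two team Ratings are made-up values chosen purely for illustration, and the function names are mine.

```python
import math


def logistic(x: float) -> float:
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-x))


def prob_home_win(home_rating: float, away_rating: float) -> float:
    """Probability that the home team wins, given the two teams' MARS Ratings."""
    return logistic(0.446518 + 0.034125 * home_rating - 0.034161 * away_rating)


# Hypothetical Ratings for two teams, A and B
rating_a, rating_b = 1020.0, 1000.0

p_a_at_home = prob_home_win(rating_a, rating_b)   # A hosts B
p_b_at_home = prob_home_win(rating_b, rating_a)   # B hosts A

print(round(p_a_at_home, 3), round(p_b_at_home, 3))
```

Because the intercept rewards whichever team is at home, the two probabilities sum to more than 1, which is just another way of saying that they are not complements of one another.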

Based on the early head-to-head prices for the weekend's Finals, it would seem that MARS rates the chances of the Hawks, Swans and Roos more highly, or rates the chances of the Crows, Pies and Eagles less highly (or both) than does the TAB Bookmaker. It also appears to rate the Cats' and Freo's chances about the same as does the TAB Bookmaker.

Using this probability matrix to simulate the 2012 Finals series 1,000,000 times yields the following team-by-team probabilities:

Hawthorn then are clear Flag favourites, with Sydney on the 2nd line followed by the Pies, Cats and Crows. The Eagles, Freo and the Roos are all rated as rank outsiders to collect the Flag.

Comparing the probabilities in this table with the prices on offer at TAB Sportsbet suggests that the only wagers currently offering a positive expectation are for Hawthorn to win the Flag (28% edge) or to make the GF (11% edge), and for Sydney to win the Flag (5% edge) or to make the GF (24% edge). No other wagers on the Flag or Make the GF markets are worthwhile.

Turning next to potential GF pairings, the simulations yield the following.

Based on these simulated results the only TAB GF Quinella prices offering value are Hawthorn v Sydney at $5.50 (54% edge), Sydney v Geelong at $26 (40% edge), Hawthorn v Kangaroos at $67 (26% edge) and Geelong v Kangaroos at $501 (58% edge). No other pairing has a positive expectation.

The top half dozen most common simulated GF pairings combined represent around 80% of total probability, which means that the remaining 20 potential pairings amongst them account for only 20% of total probability.

(Note that there are only 26 possible GF pairings not 28, because two possible pairings, those reflecting the Elimination Finals, cannot occur in the GF.)
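For those curious about the mechanics, here's a minimal sketch of how a Finals series simulation of this kind might be put together under the AFL's final eight system. It is not the code used for the results above. The Ratings are hypothetical, the number of replicates is kept small for speed, and the treatment of the Grand Final (the higher-placed team is treated as the home team) is an assumption.

```python
import math
import random
from collections import Counter


def prob_home_win(home_rating, away_rating):
    """Fitted logistic model from the text."""
    x = 0.446518 + 0.034125 * home_rating - 0.034161 * away_rating
    return 1.0 / (1.0 + math.exp(-x))


def play(home, away, ratings, rng):
    """Simulate one game; teams are ladder positions 0 to 7. Returns (winner, loser)."""
    if rng.random() < prob_home_win(ratings[home], ratings[away]):
        return home, away
    return away, home


def simulate_finals(ratings, rng):
    """Simulate one Finals series. ratings[0] belongs to the team that finished 1st."""
    # Week 1: Qualifying Finals (1 v 4, 2 v 3) and Elimination Finals (5 v 8, 6 v 7)
    qf1_w, qf1_l = play(0, 3, ratings, rng)
    qf2_w, qf2_l = play(1, 2, ratings, rng)
    ef1_w, _ = play(4, 7, ratings, rng)
    ef2_w, _ = play(5, 6, ratings, rng)
    # Week 2: Semi-Finals, with the Qualifying Final losers hosting
    sf1_w, _ = play(qf1_l, ef1_w, ratings, rng)
    sf2_w, _ = play(qf2_l, ef2_w, ratings, rng)
    # Week 3: Preliminary Finals, with the Qualifying Final winners hosting
    pf1_w, _ = play(qf1_w, sf2_w, ratings, rng)
    pf2_w, _ = play(qf2_w, sf1_w, ratings, rng)
    # Grand Final: ASSUMPTION - the higher-placed team is treated as the home team
    home, away = sorted((pf1_w, pf2_w))
    premier, _ = play(home, away, ratings, rng)
    return premier, (home, away)


def run(ratings, n_sims=20000, seed=1):
    """Tally Flag winners and Grand Final pairings across many simulated series."""
    rng = random.Random(seed)
    flags, pairings = Counter(), Counter()
    for _ in range(n_sims):
        premier, pairing = simulate_finals(ratings, rng)
        flags[premier] += 1
        pairings[pairing] += 1
    return flags, pairings


# Hypothetical Ratings in ladder order (1st to 8th), not the actual MARS Ratings
HYPOTHETICAL_RATINGS = [1040, 1015, 1030, 1012, 1010, 1020, 1005, 1008]
flags, pairings = run(HYPOTHETICAL_RATINGS)
for team in range(8):
    print(team + 1, round(flags[team] / 20000, 3))
```

One nice by-product of structuring the simulation this way is that the two impossible Grand Final pairings (5th v 8th and 6th v 7th) never appear in the tally, since the loser of each Elimination Final is knocked out in Week 1.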

As an interesting exercise, this week I decided to investigate the importance of teams' final ladder positions on their Finals aspirations. To do this I imagined that the teams in the top 8 had finished in a randomised order but with the same MARS Ratings and hence probabilities of victory against any specified opponent.

I then simulated 1,000,000 Finals series, randomising the order for the top 8 teams for each simulation, and determined that, with randomised team order but with preserved team Ratings, the Flag probabilities would become Hawthorn 39% (down from 57%), Adelaide 6% (no change), Sydney 18% (no change), Collingwood 8% (no change), West Coast 8% (up from 2%), Geelong 13% (up from 8%), Fremantle 3% (up from 1%), and the Kangaroos 4% (up from 1%). Randomisation, it seems, serves mainly to redistribute probability from Hawthorn to the teams that finished in ladder positions 5 through 8.

The summary of this exercise is that, no matter where the Hawks had finished in the eight, on the basis of their MARS Rating superiority they'd still have been Flag favourites. Similarly, the Swans would have been 2nd-favourites regardless of their ladder finish, with Geelong, Collingwood, West Coast and Adelaide all forming the next tier of the market, Geelong foremost amongst them because of its superior MARS Rating. Not even randomisation of ladder finishes, however, could substantially elevate the Flag potential of Fremantle and the Roos.